Deeply Embedded AI Accelerator for Microcontrollers and End-Point IoT Devices

Our unique differentiation starts with the ability to execute multiple AI/ML models simultaneously, which significantly expands capability over existing approaches. This advantage comes from the co-developed NeuroMosAIc Studio software, which dynamically allocates hardware resources to match the target workload, resulting in highly optimized, low-power execution. The designer may also select the optional on-device training acceleration extension, which enables iterative learning after deployment. This capability removes the dependence on the cloud while improving accuracy, efficiency, customization, and personalization without costly model retraining and redeployment, thereby extending device lifecycles.

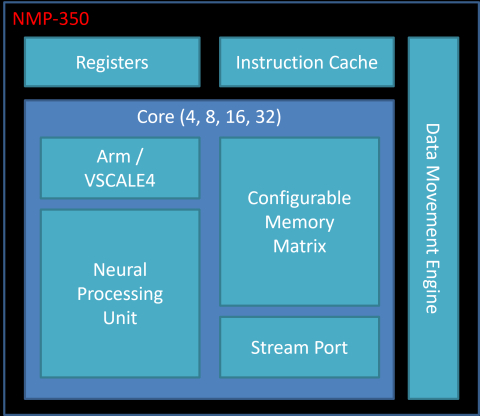

Block Diagram of the Deeply Embedded AI Accelerator for Microcontrollers and End-Point IoT Devices

AI IP

- RT-630-FPGA Hardware Root of Trust Security Processor for Cloud/AI/ML SoC FIPS-140

- NPU IP family for generative and classic AI with highest power efficiency, scalable and future proof

- NPU IP for Embedded AI

- Tessent AI IC debug and optimization

- AI accelerator (NPU) IP - 16 to 32 TOPS

- Complete Neural Processor for Edge AI